Key Takeaways

• Agentic AI in healthcare is moving from helping with tasks to taking action in live operational workflows.

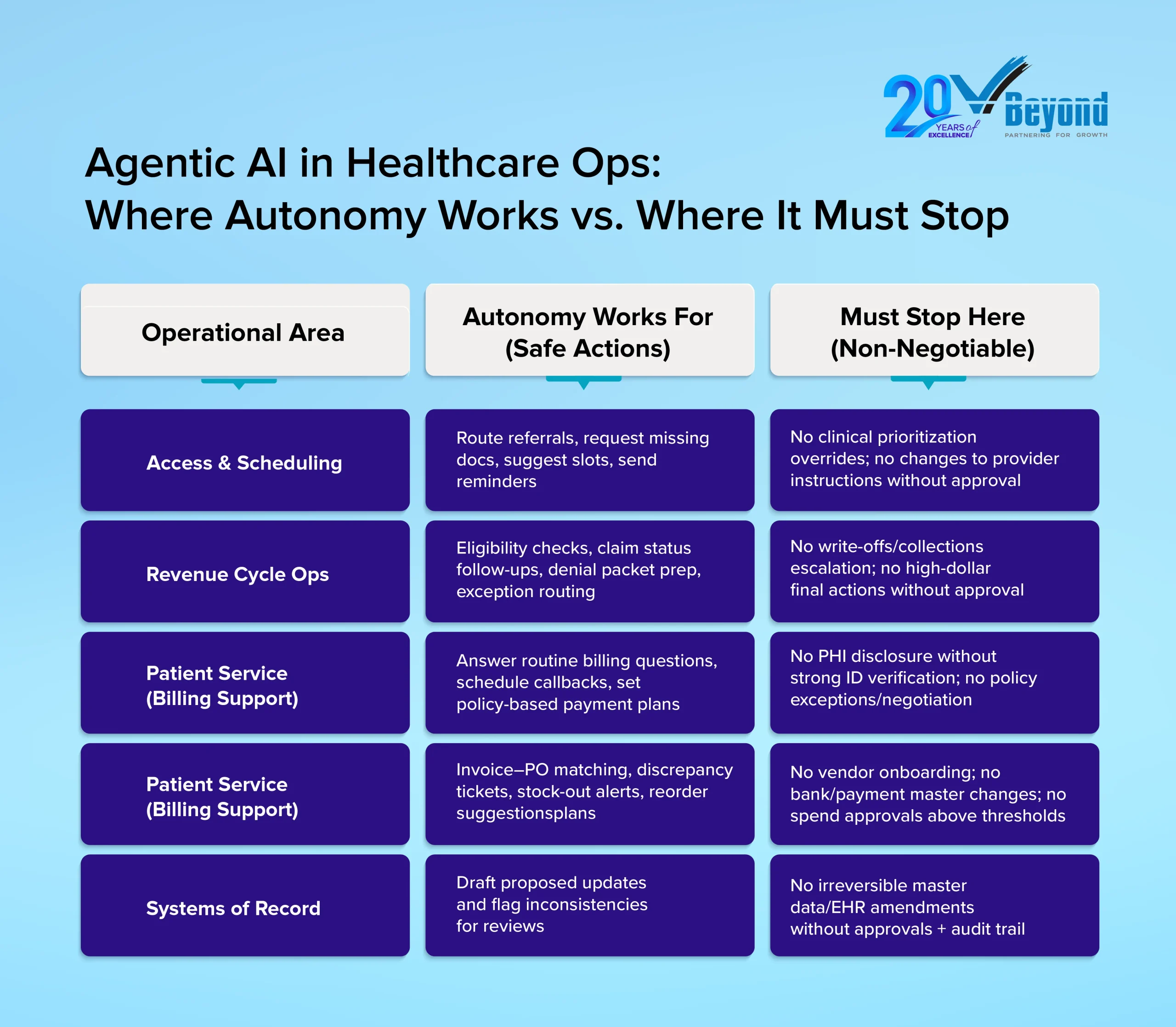

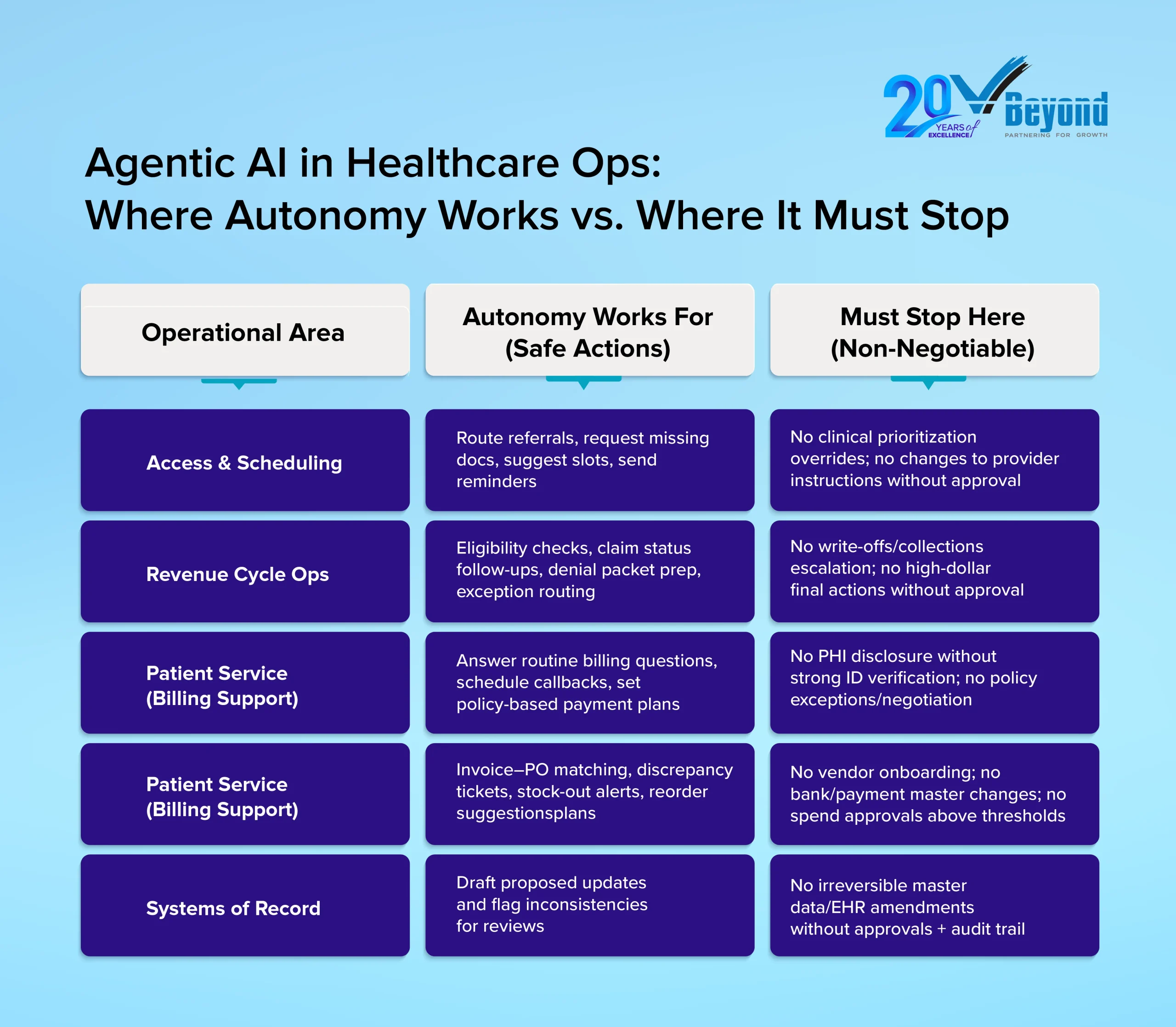

• Autonomy works best in bounded, rule-based areas such as scheduling, revenue cycle pre-work, patient service, and supply chain support.

• It must stop where decisions can affect clinical care, change records permanently, expose sensitive data, or create major financial risk.

• The value comes from narrow permissions, clear guardrails, strong audit trails, and people who oversee exceptions and ensure accountability.

Introduction: The Autonomy Question is Now Operational, Not Theoretical

The question facing healthcare operations leaders in early 2026 is no longer whether AI can summarize a prior authorization request. It is whether AI can act on it, and under what conditions that action is safe.

Agentic AI in healthcare is now operational. Gartner research shows that all surveyed health plan organizations have already implemented or plan to deploy agentic AI by 2028. McKinsey estimates that employees currently spend 20-30% of their workday on nonproductive administrative tasks that add no direct value to care delivery. Pressure to reduce that friction across access management, revenue cycle, service operations, and supply chain is real and growing.

But so is the exposure. Healthcare operations run on patient safety, compliance integrity, and financial accountability. A misrouted claim, an unauthorized record change, or an unverified disclosure can trigger regulatory violations or direct patient harm. Tolerance for errors driven by uncontrolled AI autonomy sits close to zero.

The core question is not “how smart is the AI?” It is “what is it authorized to do, and what happens when it hits a boundary?”

This blog breaks that decision down in practical terms. It covers where agentic AI works and where it must stop, the minimum controls that make it safe, and what it means for the roles organizations need to hire for and build going forward.

What “Agentic AI” Means in Healthcare Operations

Most AI tools in healthcare today function as copilots. They draft a prior authorization summary, flag a missing document, or surface a billing code recommendation. The action still belongs to a human. Agentic AI changes that structure.

An AI agent does not just recommend. It plans a sequence of steps, accesses relevant systems within its permissions, executes tasks, and routes exceptions to humans when it hits a defined boundary.

McKinsey describes AI agents as “virtual workers that can work independently once given a specific goal, details on tasks, guardrails to work within, and existing tools to implement tasks.” The key word is guardrails, not independence.

A practical autonomy scale for healthcare operations:

- Suggest: The agent generates recommendations only; a human decides and acts.

- Prepare: The agent drafts outputs and gathers required documents; a human reviews before action.

- Act with approval: The agent executes a task only after explicit human sign-off.

- Act within limits: The agent completes a workflow end-to-end, strictly within predefined thresholds, with automatic routing to humans for anything outside those limits.

Autonomy is not a technical property. It is a permission decision that operations and compliance leaders make when they design a workflow for production.
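To make the scale concrete, here is a minimal Python sketch that treats autonomy as a per-workflow permission setting rather than a model capability. The `AutonomyLevel` enum and `next_step` function are hypothetical names for illustration, not a product API.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """Hypothetical encoding of the four-step autonomy scale."""
    SUGGEST = 1            # recommendations only; a human decides and acts
    PREPARE = 2            # drafts and gathers documents; a human reviews
    ACT_WITH_APPROVAL = 3  # executes only after explicit human sign-off
    ACT_WITHIN_LIMITS = 4  # executes end-to-end inside predefined thresholds


def next_step(level: AutonomyLevel, within_limits: bool, approved: bool) -> str:
    """Return who acts next on a single task at the configured level."""
    if level == AutonomyLevel.SUGGEST:
        return "human_decides"
    if level == AutonomyLevel.PREPARE:
        return "human_reviews_draft"
    if level == AutonomyLevel.ACT_WITH_APPROVAL:
        return "agent_executes" if approved else "await_approval"
    # ACT_WITHIN_LIMITS: anything outside the thresholds is routed to a person.
    return "agent_executes" if within_limits else "route_to_human"
```

Because the level is a setting, moving a workflow up or down the scale is a configuration change that leaders approve, not a change to the agent itself.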

Where Autonomy Works in Healthcare Operations

Autonomy works best in healthcare operations when the work is high-volume, rule-based, and easy to audit. These are the processes where teams spend hours collecting missing details, checking status, and routing cases to the right place. In these areas, agentic AI can reduce repeat follow-ups and shorten cycle times, while humans stay responsible for judgment calls and exceptions.

A) Access, Referrals, and Scheduling Coordination (non-clinical)

Access work often stalls for simple reasons. A referral packet is missing an order. A demographic field is blank. A specialist office needs one more report before they can schedule. Staff members spend time chasing the same items across phone calls, faxes, portals, and inboxes.

An agent can take on parts of that coordination. It can detect missing referral elements, request missing documents through approved channels, and route work to the right queue. It can propose appointment slots based on stated constraints and send confirmations and reminders. These steps support healthcare operational efficiency because they remove delays that do not require clinical judgment.

The stop line: The agent should not override clinical prioritization rules, change provider instructions, or reclassify urgency without approval. Those decisions can affect patient safety and must remain human-led.
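A minimal sketch of the referral check, assuming a hypothetical list of required elements (real requirements vary by specialty, payer, and policy) and hypothetical queue names. The function reads the urgency set by clinical staff but never changes it, which mirrors the stop line.

```python
from dataclasses import dataclass, field

# Hypothetical required elements; actual lists vary by specialty and payer.
REQUIRED_ELEMENTS = ("order", "demographics", "insurance", "clinical_notes")


@dataclass
class Referral:
    referral_id: str
    elements: dict = field(default_factory=dict)  # element name -> document or value
    urgency: str = "routine"  # set by clinical staff; the agent only reads it


def review_referral(ref: Referral) -> dict:
    """Flag missing or blank elements and pick a queue; urgency passes through unchanged."""
    missing = [name for name in REQUIRED_ELEMENTS if not ref.elements.get(name)]
    if missing:
        # Request the missing items through approved channels (not modeled here).
        return {"action": "request_documents", "missing": missing,
                "queue": "intake_followup", "urgency": ref.urgency}
    return {"action": "ready_to_schedule", "missing": [],
            "queue": "scheduling", "urgency": ref.urgency}
```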

B) Revenue Cycle “Pre-work” and Exception Routing

Revenue cycle AI is among the most active deployment areas in healthcare right now. In 2025, more than 30% of providers prioritized AI and automation in seven specific revenue cycle use cases, up from four to five use cases in prior years. McKinsey projects that AI-enabled revenue cycle management could reduce cost to collect by 30-60% while accelerating cash realization.

An AI agent can handle “pre-work” steps where evidence and policy rules guide the process. It can run eligibility checks, kick off benefits verification workflows, and monitor claim status updates. It can assemble denial packets by gathering required documents and drafting appeal templates for human review. It can route cases based on denial reason, so the right team sees them first.

The stop line: An AI agent should not submit final actions for high-risk or high-dollar cases without approval. It should not trigger write-offs or collections escalation. Those choices can create material harm and require human authority.
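The routing and stop-line logic for denials fits in a few lines of Python. The reason categories, queue names, and the $10,000 threshold below are assumptions chosen for illustration. Actual values would come from the organization's revenue cycle and finance policies.

```python
from dataclasses import dataclass

# Hypothetical mapping from denial reason category to the owning team.
DENIAL_QUEUES = {
    "eligibility": "eligibility_team",
    "authorization": "prior_auth_team",
    "coding": "coding_team",
    "medical_necessity": "clinical_appeals_team",
}
HIGH_DOLLAR_THRESHOLD = 10_000.00  # assumed policy value set by finance
NEVER_AUTONOMOUS = {"write_off", "collections_escalation"}  # always human decisions


@dataclass
class Denial:
    claim_id: str
    reason: str
    amount: float


def route_denial(d: Denial) -> dict:
    """Send the denial to the owning team and list the agent's pre-work."""
    return {
        "claim_id": d.claim_id,
        "queue": DENIAL_QUEUES.get(d.reason, "denials_general"),
        # Pre-work only; the drafted appeal still goes to a person for review.
        "agent_tasks": ["assemble_denial_packet", "draft_appeal_template"],
    }


def agent_may_perform(action: str, amount: float) -> bool:
    """Final actions: never for restricted types, and only below the threshold."""
    if action in NEVER_AUTONOMOUS:
        return False
    return amount < HIGH_DOLLAR_THRESHOLD
```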

C) Patient Service Operations Within Policy

Billing and service calls often follow patterns. Patients ask for balances, payment options, due dates, and claim status. Many calls end with the same needs: a clear explanation, a standard payment plan, a callback, or a transfer to the correct team.

An agent can answer routine billing questions using approved knowledge, set up payment plans within defined offers, and schedule callbacks. It can update demographics through verified flows and route complex cases to human agents. These steps can reduce call handling time and increase containment for simple issues. That keeps discussions of AI's benefits and challenges in healthcare grounded in measurable outcomes.

The stop line: No sensitive disclosure should happen without strong identity verification. No negotiation should happen outside policy. If the workflow involves PHI disclosure or exceptions, it should be routed to a human reviewer.
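Here is a minimal sketch of the verification gate. It assumes a hypothetical set of required identity checks and a hypothetical list of topics that count as PHI disclosure. The organization's privacy and security policies would define what "strong" verification means. Every request is logged with the checks that passed, so proof exists after the fact.

```python
from dataclasses import dataclass

# Hypothetical checks that together count as strong verification.
REQUIRED_CHECKS = frozenset({"date_of_birth", "account_number", "one_time_passcode"})
# Hypothetical topics that would disclose PHI and therefore go to a person.
PHI_DISCLOSURE_TOPICS = frozenset({"claim_details", "diagnosis_codes", "statement_line_items"})


@dataclass
class ServiceRequest:
    topic: str                  # e.g. "balance", "payment_plan", "claim_details"
    passed_checks: frozenset    # identity checks the caller actually passed
    within_policy: bool = True  # False for anything outside the standard offers


def handle_request(req: ServiceRequest, audit_log: list) -> str:
    """Decide whether the agent may handle a request, and log the proof."""
    verified = REQUIRED_CHECKS <= req.passed_checks
    if not verified:
        outcome = "route_to_human"  # weak or unclear verification: stop here
    elif req.topic in PHI_DISCLOSURE_TOPICS or not req.within_policy:
        outcome = "route_to_human"  # PHI disclosure or a policy exception
    else:
        outcome = "agent_handles"   # routine, verified, within policy
    # Logged proof of which checks passed, whatever the outcome.
    audit_log.append({
        "topic": req.topic,
        "checks_passed": sorted(req.passed_checks),
        "verified": verified,
        "outcome": outcome,
    })
    return outcome
```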

D) Supply Chain and Back-office Reconciliation

Supply chain and back-office teams deal with structured records and constant variance. Invoices do not match purchase orders. Quantities differ. Prices change. Contracts include thresholds and compliance triggers. Much of the work is matching, flagging, and routing.

An agent can reconcile invoices against POs, flag price or quantity discrepancies, and open tickets for resolution. It can monitor stock-out risks and suggest re-order quantities based on consumption signals and stated rules. It can track contract compliance triggers so that exceptions reach the right approver.

The stop line is clear. The agent should not onboard vendors, change bank or payment master details, or approve purchases above thresholds. Those actions require segregation of duties and human sign-off.
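Invoice-to-PO matching is a clear example of rule-based work. The Python sketch below assumes a 2% unit-price tolerance and a $25,000 approval threshold, both hypothetical values. It only flags and routes. It never approves a purchase or touches vendor master data.

```python
from dataclasses import dataclass

PRICE_TOLERANCE = 0.02          # assumed: 2% unit-price variance is acceptable
APPROVAL_THRESHOLD = 25_000.00  # assumed: totals above this need an approver


@dataclass
class Line:
    sku: str
    qty: int
    unit_price: float


def reconcile(po: dict, invoice: list) -> list:
    """Compare invoice lines with PO lines keyed by SKU; return discrepancies.

    This sketch does not check PO lines absent from the invoice (e.g. partial shipments).
    """
    issues = []
    for line in invoice:
        expected = po.get(line.sku)
        if expected is None:
            issues.append((line.sku, "not_on_po"))
            continue
        if line.qty != expected.qty:
            issues.append((line.sku, "quantity_mismatch"))
        if abs(line.unit_price - expected.unit_price) > PRICE_TOLERANCE * expected.unit_price:
            issues.append((line.sku, "price_variance"))
    return issues


def next_action(po: dict, invoice: list) -> str:
    """Flag and route only; approval above the threshold stays with a person."""
    if reconcile(po, invoice):
        return "open_ticket"
    total = sum(ln.qty * ln.unit_price for ln in invoice)
    if total > APPROVAL_THRESHOLD:
        return "route_to_approver"
    return "match_confirmed"
```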

Across these four areas, one theme holds. Autonomy succeeds when organizations redesign work around exception handling, not when they try to automate every edge case.

Where Autonomy Must Stop (and Why)

Agentic AI creates operational value when it stays within bounded, policy-governed workflows. But there are four categories of decisions where human authority is non-negotiable, regardless of how well an agent performs on routine tasks.

A) Clinical Judgment and Patient Safety Decisions

Diagnosis, triage, treatment selection, discharge readiness, and medication changes are human responsibilities. AI agents in healthcare operations must not touch these decisions. As McKinsey notes, human-in-the-loop oversight remains essential wherever AI intersects with clinical operations. An agent that coordinates a referral packet is not the same as one that determines clinical urgency. That line must hold.

B) Irreversible Changes to Systems of Record

Master data edits, credentialing status updates, EHR record amendments, and payer enrollment changes carry legal and operational weight that cannot be undone with a single correction. These actions require explicit approvals, segregation of duties, and a documented audit trail. No agent should execute them autonomously.

Deloitte’s guidance on AI governance in healthcare is direct: governance must enable traceability, explainability, and clear ownership for decisions and their downstream effects.

C) Identity, Consent, and Sensitive Disclosure

Healthcare operations handle PHI every day. No disclosure of sensitive details should happen without strong identity verification and logged proof. The same rule applies to consent capture and preference changes. If verification is weak or unclear, the agent should stop and route the case to a person. This is a core part of compliance for agentic AI in healthcare.

D) Material Financial Harm Decisions

Autonomy must stop at actions that can cause significant financial harm, such as denials, collections escalation, refunds or write-offs beyond policy, and high-dollar exceptions. An agent can gather evidence, draft letters, and route cases, but humans should approve decisions that change financial outcomes.

Scrutiny is also shifting toward proof. Auditors and risk teams will care about who had authority, what checks were applied, and what evidence exists in logs. Gartner has warned that many agentic AI projects will be canceled when business value is unclear or risk controls are inadequate, which reinforces why these stop-lines matter.

The Minimum Guardrails That Make Autonomy Safe

Most AI programs do not fail because the model is weak. They fail because the permission design is too broad, the policy logic is vague, or the exception path is missing. The strongest early results come from narrow, permissioned autonomy.

Five controls that define the operating boundary:

- Least-privilege permissions: Each agent accesses only the tools and data needed for its specific workflow. Nothing more.

- Policy gates built into execution: Thresholds, restricted action lists, and approval steps sit inside the workflow itself, not in a document no one reads at runtime.

- Full auditability: Every action is logged with a timestamp, data source, policy checks passed or failed, and approver identity where required. This is the traceability and explainability that Deloitte's governance guidance treats as non-negotiable.

- Exception handling: Clear queues for edge cases keep humans informed of what the agent could not resolve. Autonomy should reduce noise, not hide risk.

- Fallback mode: When confidence is low or systems are unavailable, the agent stops and routes to a human. No improvisation on ambiguous inputs.

Without these controls, autonomy is not a feature. It is a liability.
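The five controls can be combined into a single execution wrapper. What follows is a minimal Python illustration under stated assumptions. `AgentPolicy` and `GuardedAgent` are hypothetical names. The confidence score is assumed to come from whatever system proposes the action. A real deployment would write to a tamper-evident audit store rather than an in-memory list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy, owned by the workflow owner."""
    agent_id: str
    allowed_tools: frozenset       # least privilege: only this workflow's tools
    restricted_actions: frozenset  # never executed autonomously
    max_amount: float              # dollar threshold for autonomous action
    min_confidence: float = 0.9    # below this, fall back to a human


@dataclass
class GuardedAgent:
    policy: AgentPolicy
    audit_log: list = field(default_factory=list)
    exception_queue: list = field(default_factory=list)

    def run(self, tool: str, action: Callable[[], object], *,
            amount: float = 0.0, confidence: float = 1.0,
            source: str = "unknown", approver: Optional[str] = None):
        """Run `action` only if every policy gate passes; log every attempt."""
        # Policy gates evaluated at runtime, inside the workflow itself.
        checks = {
            "tool_permitted": tool in self.policy.allowed_tools,
            "not_restricted": tool not in self.policy.restricted_actions,
            "within_threshold": amount <= self.policy.max_amount,
            "confidence_ok": confidence >= self.policy.min_confidence,
        }
        failed = [name for name, ok in checks.items() if not ok]
        result = None
        if not failed:
            try:
                result = action()
            except Exception as exc:
                # Fallback mode: a system error stops the agent; no improvising.
                failed.append(f"error:{type(exc).__name__}")
        outcome = "routed_to_human" if failed else "executed"
        if failed:
            # Exception handling: a person sees what the agent could not resolve.
            self.exception_queue.append({"tool": tool, "reasons": failed})
        # Full auditability: every attempt is logged, executed or not.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent_id": self.policy.agent_id,
            "tool": tool,
            "data_source": source,
            "checks": checks,
            "approver": approver,
            "outcome": outcome,
        })
        return result
```

One design choice matters most here. A failed check never raises an error to the caller. Instead, it produces a log entry and an exception-queue item, so a person always sees what the agent did not do.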

What Changes for Talent, HR, and Recruiting

Agentic AI in healthcare operations does not simply replace repetitive tasks. It redistributes where human judgment is required. The roles that matter most going forward are not the ones running transactions. They are the ones governing, auditing, and designing the workflows that agents execute.

Deloitte’s 2026 Global Human Capital Trends report signals this shift clearly: organizations that align AI deployment with deliberate workforce restructuring see better outcomes than those that treat AI as a headcount reduction tool. For HR leaders and hiring managers, that distinction shapes what to build and who to hire.

Roles That Are Emerging

These are concrete, hire-able functions, not conceptual titles:

- Agent Supervisor / Ops QA Lead: Monitors exception queues, audits agent outcomes, reviews action samples, and manages escalations. The role requires operational experience, not AI engineering credentials.

- Workflow Owner (Ops Product Owner): Owns KPIs and defines permission boundaries. Decides what the agent can and cannot do, and when thresholds need revisiting.

- AI Controls and Audit Analyst: Validates logs, permissions, approval trails, and compliance evidence. Critical for AI governance in healthcare where regulatory exposure is high.

- Operations Process Engineer (AI-enabled): Redesigns workflows so that agents reduce human involvement in routine steps while humans retain full ownership of exceptions.

Workforce Planning Insight

Agentic AI reduces repetitive follow-up work and increases demand for exception handling, quality assurance, and controls oversight. McKinsey’s healthcare workforce research confirms that AI shifts the skill premium toward roles requiring judgment, accountability, and cross-functional coordination, rather than task execution.

Hiring plans should rebalance toward supervisors, workflow owners, and audit-ready operations analysts. AI handles the volume. Humans govern the outcomes.

Understanding how agents and humans share work is just as important as knowing where agents must stop. (For broader context on how agentic systems are reshaping roles and collaboration inside organizations, read our blog Agentic AI and the New Shape of Work: Rethinking How Humans and AI Collaborate.)

Conclusion: A Short Autonomy Checklist Leaders Can Reuse

Before any AI agent goes into production in a healthcare operations environment, the answers to these questions should already be documented:

- Is the workflow bounded, auditable, and reversible?

- What are the explicit stop-lines: clinical risk, irreversible record edits, identity and consent, material financial impact?

- What permissions and thresholds limit what the agent can act on?

- What approvals are required, and who owns the exceptions queue?

- What logs exist to prove compliance and accountability after the fact?

- What roles are in place to supervise autonomy: QA, workflow ownership, controls?

These are not implementation details to finalize post-deployment. They are the conditions that make deployment responsible in the first place.
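One way to keep these answers reviewable is to store them as a record next to the agent's configuration. Here is a minimal sketch, assuming a hypothetical `DeploymentReadiness` record whose fields mirror the checklist. Under this approach, an agent is cleared for production only when `open_items` returns an empty list.

```python
from dataclasses import dataclass, fields


@dataclass
class DeploymentReadiness:
    """Hypothetical record of the checklist answers for one agent."""
    bounded_auditable_reversible: bool
    stop_lines: list                  # clinical risk, irreversible edits, ...
    permissions_and_thresholds: dict  # what the agent may act on, and limits
    exception_queue_owner: str        # who owns approvals and exceptions
    compliance_logs: list             # logs that prove accountability
    supervising_roles: list           # QA, workflow ownership, controls


def open_items(r: DeploymentReadiness) -> list:
    """Return checklist fields that are still unanswered (False or empty)."""
    return [f.name for f in fields(r) if not getattr(r, f.name)]
```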

Healthcare operations move fast, and the pressure to automate is real. But the organizations that will build lasting, defensible AI programs are not the ones that move fastest. They are the ones that design authority as carefully as they design capability.

Building the operations team that can govern AI the right way starts with the right hire. Connect with VBeyond Corporation to find experienced, audit-ready talent for the roles that keep your workflows compliant, accountable, and built to last.

FAQs

1. What is agentic AI in healthcare operations?

Agentic AI in healthcare operations refers to AI systems that can do more than assist. They can plan steps, complete tasks inside workflows, and route exceptions to humans when a case falls outside approved limits.

2. How is agentic AI different from healthcare AI copilots?

A copilot helps by drafting, summarizing, or recommending next steps. An agent can take action inside a workflow when permissions, rules, and approvals are already built in.

3. Where can agentic AI safely operate in healthcare workflows?

It works best in high-volume, rule-based operational tasks such as scheduling support, claims follow-up, benefits verification, patient service, and back-office coordination. These areas are safer because actions can be checked, logged, and reversed when needed.

4. Where should AI autonomy stop in healthcare operations?

AI autonomy should stop at clinical judgment, irreversible record changes, identity and consent actions, and major financial decisions. These areas need clear human authority because the risk is too high.

5. Can agentic AI improve healthcare revenue cycle management?

Yes. It can help with eligibility checks, claim status follow-up, denial packet preparation, and exception routing. It improves speed and reduces manual work, but high-risk or high-value final actions should still stay with human reviewers.